Are Flowcharts and Pseudocode Helpful?

Flowcharts and pseudocode are GCSE staples, but how useful are they? And why isn't pseudocode like it used to be?

Both pseudocode and flowcharts appear in GCSE Computer Science papers, so we can't avoid them, but are they something that you only learn for exams, like stem and leaf diagrams in GCSE maths, or are they actually useful?

Flowcharts

Flowcharts are both cumbersome and time-consuming to draw, but are a staple of Computing courses. When I was at school in the 80s, before Shapes in Word and other tools, a number of us carried flowchart stencils to speed up the process - although the description of our decisions (as we called selection in those days) would rarely fit in the diamond that these would produce.

When I worked in the software industry, for a large chunk of the 90s, I don't think I ever saw a flowchart being used by a programmer - although they were used to describe our support procedures. As A level students may have discovered, it's quite difficult to describe anything other than a very simple program using a flowchart because there will be lines all over the place and links between multiple pages.

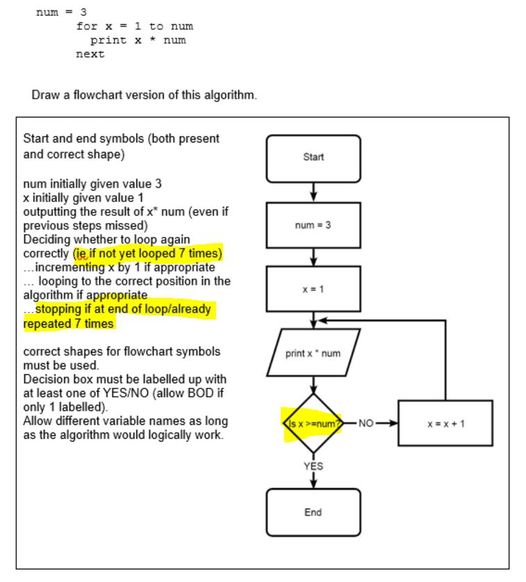

There aren't only practical issues, though. The question below was recently discussed in a Facebook group and highlights one of the main problems with flowcharts:

The "pseudocode" at the top shows what is described in GCSE terminology as a count-controlled loop, but the flowchart itself is actually showing a condition-controlled loop. Worse than that, the condition is checked at the end, so it's effectively a repeat... until loop, which most students who use Python won't have encountered before, because Python has no such construct.
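For anyone who hasn't met the distinction, here is a rough Python sketch of the two kinds of loop. The function names and the list of visited values are my own illustration, not taken from the exam question, and because Python has no repeat... until statement the second version has to fake one with `while True` and `break`.

```python
def count_controlled(n):
    """Count-controlled loop: the number of repetitions is fixed up front."""
    visited = []
    for i in range(1, n + 1):
        visited.append(i)
    return visited


def repeat_until(n):
    """Condition-controlled loop with the test at the end (repeat ... until).

    Emulated with `while True` and `break`, so the body always runs
    at least once, whatever the value of n.
    """
    visited = []
    i = 1
    while True:
        visited.append(i)
        i += 1
        if i > n:  # the "until" condition, checked after the body
            break
    return visited
```

The two behave identically for a positive count, but not for zero: `count_controlled(0)` does nothing, whereas `repeat_until(0)` still runs its body once. That difference is exactly what the flowchart hides.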

The purpose of pseudocode and flowcharts is to allow programmers to think about the solution in a (programming-) language-independent way, but if they're incapable of representing one of the most common programming constructs, then surely they're not that useful?

Can they ever be useful? I often use Scratch to introduce students to new programming concepts, such as procedures and recursion, because they're easier to visualise and the benefit can be clearer. Similarly, flowcharts can make it easier to understand the flow through loops and decisions.

Pseudocode

So pseudocode is supposed to represent the program in a language-independent way, but does it? I suspect that three programmers who are confident in C, Python and Lisp would produce solutions that are different, and if asked to write pseudocode first, would produce pseudocode that was similar to their intended method in their favourite programming language. For example, I've been programming since 1981, but (apart from brief experimentation with Lisp in the 80s and when learning about data abstraction and different structures as a student) had never really used a list before I first used Python in about 2013. Any pseudocode I used, therefore, would have been unlikely to include a list.

A particularly strange development is the introduction of formal pseudocode languages like those used by GCSE exam boards. I had a recollection that we wrote pseudocode in plain English when I was at school...

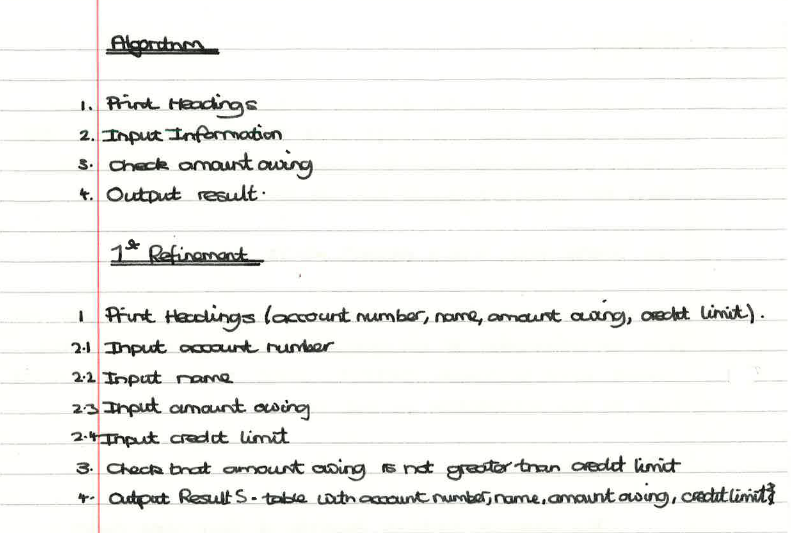

...then, earlier this year, as I was putting away the Christmas decorations, I found my O level Computer Studies notes in the loft.

As well as writing algorithms in plain English, we started off with only a few high-level steps and then "zoomed in", adding extra details in stages we called "refinement" - a bit like students did with Data-Flow Diagrams in the days of A level ICT. Here's a short example:

I rather like that approach - it illustrates the decomposition of a problem. The idea is that you think of the big steps - e.g. input the data, do some calculations, output the answer - but then you zoom in and say, "What data do I need?", "What are the calculations required?", "How do I format the output?", etc. That seems to be a more useful approach than learning an Exam Reference Language, and most of the time, jotting down the zoomed-in detail of one of the sections is all that an experienced programmer will need to do.
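The same "big steps first, then zoom in" idea carries over quite naturally into code. The sketch below is only an illustration: the problem (averaging some comma-separated marks) and all of the function names are made up for the purpose, not taken from my O level notes.

```python
# Level 1: the big steps, written first.
def main(raw_marks):
    marks = get_data(raw_marks)      # 1. input the data
    average = calculate(marks)       # 2. do some calculations
    return format_output(average)    # 3. output the answer


# Level 2: each big step refined into its own detail.
def get_data(raw_marks):
    # 1.1 split the text on commas, 1.2 convert each piece to a number
    return [float(piece) for piece in raw_marks.split(",")]


def calculate(marks):
    # 2.1 add up the marks, 2.2 divide by how many there are
    return sum(marks) / len(marks)


def format_output(average):
    # 3.1 round to one decimal place, 3.2 add a label
    return f"Average mark: {average:.1f}"
```

Calling `main("10, 15, 20")` gives `"Average mark: 15.0"`. The top-level function reads almost like the plain-English first draft, and each refinement can be written and tested separately.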

The other benefit of using this approach over a formalised pseudocode is that you can express algorithms for things other than computer programs. My son is in year 2 and told me recently that he'd been doing Computing in school. When I asked what that involved, he said "We wrote some instructions for getting dressed". That lends itself well to "refinement", e.g. 1 put pants on, 2 put socks on, then 1.1 put left foot through left hole, 1.2 put right foot through right hole, 1.3 pull up, 2.1 put left sock on, 2.2 put right sock on, etc.

Other Diagrams

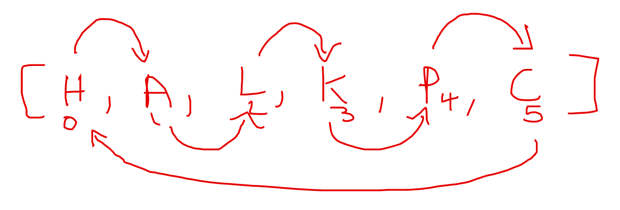

While I almost never use flowcharts when I'm writing a program myself, that's not to say that diagrams in general aren't useful. In programming lessons, and when making things myself, I often make informal diagrams to illustrate a particular concept, and that can help with the coding. In the last programming lesson I taught (an hour ago), we looked at an OCR past paper question that required students to write an algorithm (which we did as a practical programming exercise) to rotate people a number of places around a circular table.

Having realised that (at GCSE level) there are no circular data structures, the students appreciated that if you stored the people in an array, the key to the solution was shuffling the names (the diagram shows only initials to save space) along the array.

The diagram shows what you need to do, the order in which you need to do the shuffling (i.e. right to left, otherwise values are overwritten), and the problem of what happens when you get to the end. We also used the same type of diagram to show that things get significantly more complex if you want to move the people more than one place.
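As a rough sketch of the shuffle described above (not the exam board's model answer, and not necessarily what my students wrote), the following assumes that everyone moves one seat to the right and that the person at the end wraps round to the first seat. The function names and the direction of rotation are my assumptions.

```python
def rotate_one_place(seats):
    """Move everyone one seat to the right, changing the list in place."""
    if len(seats) < 2:
        return seats
    last = seats[-1]  # save the person who would fall off the end
    # Work from right to left so each value is copied before it is overwritten.
    for i in range(len(seats) - 1, 0, -1):
        seats[i] = seats[i - 1]
    seats[0] = last  # wrap round to the first seat
    return seats


def rotate(seats, places):
    """Rotate by several places by repeating the single-place shuffle."""
    if not seats:
        return seats
    for _ in range(places % len(seats)):
        rotate_one_place(seats)
    return seats
```

For example, `rotate_one_place(["A", "B", "C", "D"])` gives `["D", "A", "B", "C"]`. Repeating the one-place shuffle is the simplest way to move more than one place, though not the most efficient; moving several places in a single pass means saving more than one value, which is where the extra complexity comes from.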

We have a saying in our family that "doing things 'properly' is for people who don't know what they're doing". In the same way that expert bakers don't slavishly follow recipes, confident programmers don't need to produce flowcharts and pseudocode for every program, but there are occasions on which they can be helpful.

This blog was originally written in November 2021.